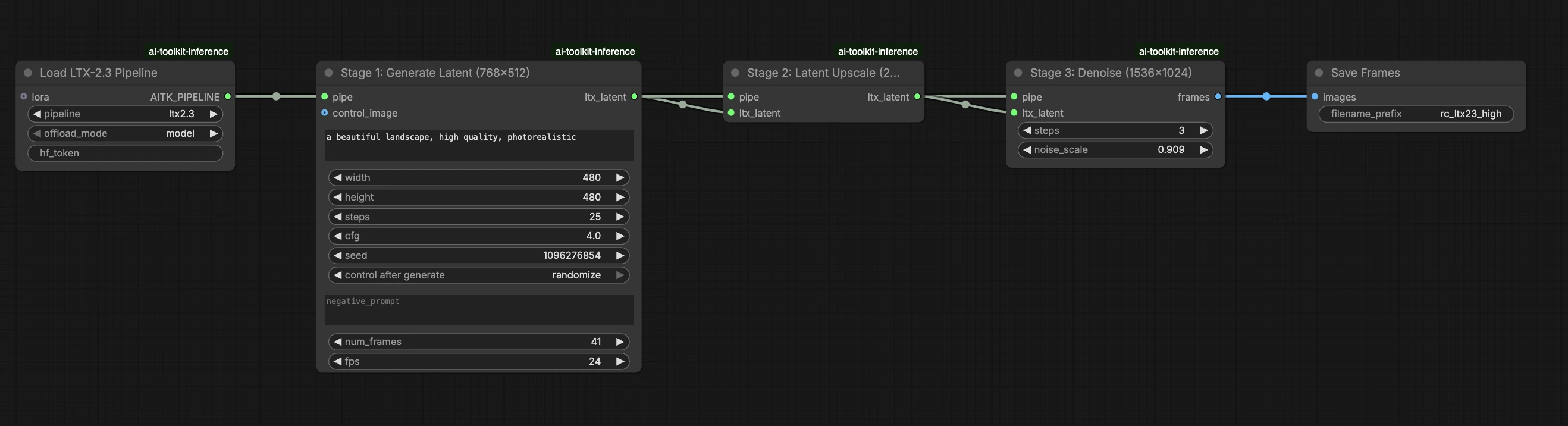

LTX 2.3 LoRA ComfyUI Inference: training-matched AI Toolkit LoRA output with the LTX 2.3 pipeline#

This production-ready RunComfy workflow runs LTX 2.3 LoRA inference in ComfyUI through the RC LTX 2.3 (LTX2Pipeline) node, which aligns inference at the pipeline level instead of rebuilding it from a generic sampler graph. RunComfy built and open-sourced this custom node (see the runcomfy-com repositories), and you control adapter application with lora_path and lora_scale.

Note: This workflow requires a 2X Large or larger machine to run.

Why LTX 2.3 LoRA ComfyUI Inference often looks different in ComfyUI#

AI Toolkit training previews are rendered through a model-specific LTX 2.3 pipeline, where text encoding, scheduling, and LoRA injection are designed to work together. In ComfyUI, rebuilding LTX 2.3 with a different graph (or a different LoRA loader path) can change those interactions, so copying the same prompt, steps, CFG, and seed still produces visible drift. The RunComfy RC pipeline nodes close that gap by executing LTX 2.3 end-to-end in LTX2Pipeline and applying your LoRA inside that pipeline, keeping inference aligned with preview behavior. Source: RunComfy open-source repositories.

How to use the LTX 2.3 LoRA ComfyUI Inference workflow#

Step 1: Get the LoRA path and load it into the workflow (2 options)#

Option A — RunComfy training result → download to local ComfyUI:

- Go to Trainer → LoRA Assets

- Find the LoRA you want to use

- Click the ⋮ (three-dot) menu on the right → select Copy LoRA Link

- In the ComfyUI workflow page, paste the copied link into the Download input field at the top-right corner of the UI

- Before clicking Download, make sure the target folder is set to ComfyUI > models > loras (this folder must be selected as the download target)

- Click Download; this saves the LoRA file into the correct `models/loras` directory

- After the download finishes, refresh the page

- The LoRA now appears in the LoRA select dropdown in the workflow — select it

Option B — Direct LoRA URL (overrides Option A):

- Paste the direct `.safetensors` download URL into the `path / url` input field of the LoRA node

- When a URL is provided here, it overrides Option A: the workflow loads the LoRA directly from the URL at runtime

- No local download or file placement is required

Tip: confirm the URL resolves to the actual .safetensors file (not a landing page or redirect).
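
One way to act on this tip is to fetch only the first few bytes of the URL and check whether the response looks like an HTML page. The sketch below is an illustration, not part of the workflow: `looks_like_landing_page` and `probe` are hypothetical helper names, and the check is a heuristic that only catches obvious HTML responses.

```python
import urllib.request

HTML_TYPES = {"text/html", "application/xhtml+xml"}


def looks_like_landing_page(content_type, first_bytes):
    """Return True when a response looks like an HTML page rather than a weights file."""
    mime = (content_type or "").split(";")[0].strip().lower()
    if mime in HTML_TYPES:
        return True
    head = first_bytes.lstrip().lower()
    return head.startswith(b"<!doctype") or head.startswith(b"<html")


def probe(url, timeout=10.0):
    """Fetch the first bytes of `url` (hypothetical helper; needs network access)."""
    # The Range header asks for 64 bytes only; servers may ignore it,
    # so the read below is capped as well.
    request = urllib.request.Request(url, headers={"Range": "bytes=0-63"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        final_url = response.geturl()  # URL after any redirects were followed
        content_type = response.headers.get("Content-Type")
        first_bytes = response.read(64)
    return final_url, content_type, first_bytes
```

Calling `probe(your_url)` and passing the returned content type and bytes to `looks_like_landing_page` gives a quick signal before you paste the link into the LoRA node. The returned `final_url` shows where any redirects ended up.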

Step 2: Match inference parameters with your training sample settings#

In the LoRA node, select your adapter in lora_path (Option A), or paste a direct .safetensors link into path / url (Option B overrides the dropdown). Then set lora_scale to the same strength you used during training previews and adjust from there.

Remaining parameters are on the Generate node (and, depending on the graph, the Load Pipeline node):

- `prompt`: your text prompt (include trigger words if you trained with them)

- `width` / `height`: output resolution; match your training preview size for the cleanest comparison (multiples of 32 are recommended for LTX 2.3)

- `num_frames`: number of output video frames

- `sample_steps`: number of inference steps (30 is a common default)

- `guidance_scale`: CFG/guidance value (5.5 is a common default; don't exceed 7)

- `seed`: fixed seed to reproduce; change it to explore variations

- `seed_mode` (only if present): choose `fixed` or `randomize`

- `frame_rate`: output FPS; keep consistent with training settings for motion alignment
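
The two numeric recommendations above (width and height as multiples of 32, guidance no higher than 7) are easy to check before queueing a run. The following is a minimal sketch with hypothetical helper names (`snap_to_multiple`, `check_settings`) and made-up example values. It only mirrors the parameter names listed above and does not call any ComfyUI or RunComfy API.

```python
def snap_to_multiple(value, base=32):
    """Round `value` to a nearby positive multiple of `base`."""
    return max(base, int(round(value / base)) * base)


def check_settings(settings):
    """Return a list of warnings for a dict of Generate-node values."""
    warnings = []
    for key in ("width", "height"):
        if settings[key] % 32 != 0:
            suggestion = snap_to_multiple(settings[key])
            warnings.append(f"{key}={settings[key]} is not a multiple of 32; try {suggestion}")
    if settings["guidance_scale"] > 7:
        warnings.append(f"guidance_scale={settings['guidance_scale']} exceeds 7")
    return warnings


# Illustrative values only; replace them with your own training sample settings.
settings = {
    "width": 768,
    "height": 500,
    "num_frames": 121,
    "sample_steps": 30,
    "guidance_scale": 5.5,
    "seed": 42,
    "frame_rate": 24,
}

for message in check_settings(settings):
    print(message)
```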

Training alignment tip: if you customized sampling values during training (seed, guidance_scale, sample_steps, trigger words, resolution), mirror those exact values here. If you trained on RunComfy, open Trainer → LoRA Assets → Config to view the resolved YAML and copy the preview/sample settings into the workflow nodes.
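
When copying values by hand, a small diff between the training sample settings and the workflow values can catch a missed field. This is a sketch under assumptions: both sides are plain dicts you fill in yourself, `diff_settings` is a hypothetical helper, and the key names follow the Generate node parameters. The resolved training YAML may use different field names, so map them before comparing.

```python
COMPARED_KEYS = (
    "width", "height", "num_frames", "sample_steps",
    "guidance_scale", "seed", "frame_rate",
)


def diff_settings(training, workflow, keys=COMPARED_KEYS):
    """Return {key: (training_value, workflow_value)} for every mismatch."""
    mismatches = {}
    for key in keys:
        if training.get(key) != workflow.get(key):
            mismatches[key] = (training.get(key), workflow.get(key))
    return mismatches


# Illustrative values only.
training = {"width": 768, "height": 512, "num_frames": 121, "sample_steps": 30,
            "guidance_scale": 5.5, "seed": 42, "frame_rate": 24}
workflow = dict(training, sample_steps=25, seed=7)

for key, (trained, current) in diff_settings(training, workflow).items():
    print(f"{key}: training={trained} workflow={current}")
```

An empty result means every compared field matches. A key missing on either side shows up as `None`.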

Step 3: Run LTX 2.3 LoRA ComfyUI Inference#

Click Queue/Run — the SaveVideo node writes results to your ComfyUI output folder.

Quick checklist:

- ✓ LoRA is either downloaded into `ComfyUI/models/loras` (Option A) or loaded via a direct `.safetensors` URL (Option B)

- ✓ Page refreshed after local download (Option A only)

- ✓ Inference parameters match training `sample` config (if customized)

If everything above is correct, the inference results here should closely match your training previews.

Troubleshooting LTX 2.3 LoRA ComfyUI Inference#

Most LTX 2.3 "training preview vs ComfyUI inference" gaps come from pipeline-level differences (how the model is loaded, scheduled, and how the LoRA is merged), not from a single wrong knob. This RunComfy workflow restores the closest "training-matched" baseline by running inference through RC LTX 2.3 (LTX2Pipeline) end-to-end and applying your LoRA inside that pipeline via lora_path / lora_scale (instead of stacking generic loader/sampler nodes).

(1) LoRA shape mismatches or "key not loaded" warnings#

Why this happens

The LoRA was trained for a different model family or a different LTX variant. You'll see many `lora key not loaded` lines and potentially shape mismatch errors.

How to fix (recommended)

- Make sure the LoRA was trained specifically for LTX 2.3 with AI Toolkit (LTX 2.0 / 2.1 / 2.2 LoRAs are not interchangeable).

- Keep the graph "single-path" for LoRA: load the adapter only through the workflow's `lora_path` input and let LTX2Pipeline handle the merge. Don't stack an additional generic LoRA loader in parallel.

- If you already hit a mismatch and ComfyUI starts producing unrelated CUDA/OOM errors afterward, restart the ComfyUI process to fully reset the GPU + model state, then retry with a compatible LoRA.
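
If you are unsure which model a LoRA file was trained for, you can list its tensor names and shapes without loading any weights. The sketch below reads the `.safetensors` header with the standard library only. It relies on the file layout (an 8-byte little-endian header length followed by a JSON header). The function names are hypothetical, and which key names LTX 2.3 expects is not something this snippet knows.

```python
import json
import struct


def read_safetensors_header(path):
    """Return the JSON header of a .safetensors file as a dict."""
    with open(path, "rb") as handle:
        # First 8 bytes: unsigned little-endian length of the JSON header.
        (header_len,) = struct.unpack("<Q", handle.read(8))
        return json.loads(handle.read(header_len).decode("utf-8"))


def tensor_shapes(header):
    """Map tensor name -> shape, skipping the optional __metadata__ entry."""
    return {
        name: tuple(info["shape"])
        for name, info in header.items()
        if name != "__metadata__"
    }
```

Printing a few entries of `tensor_shapes(read_safetensors_header(path))` for your LoRA, and comparing them with a LoRA known to load correctly, usually makes a wrong-family adapter obvious from the key prefixes or shapes.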

(2) Inference results don't match training previews#

Why this happens

Even when the LoRA loads, results can still drift if your ComfyUI graph doesn't match the training preview pipeline (different defaults, different LoRA injection path, different scheduling).

How to fix (recommended)

- Use this workflow and paste your direct `.safetensors` link into `lora_path`.

- Copy the sampling values from your AI Toolkit training config (or RunComfy Trainer → LoRA Assets → Config): `width`, `height`, `num_frames`, `sample_steps`, `guidance_scale`, `seed`, `frame_rate`.

- Keep "extra speed stacks" out of the comparison unless you trained/sampled with them.

(3) Using LoRAs significantly increases inference time#

Why this happens

A LoRA can make LTX 2.3 much slower when the LoRA path forces extra patching/dequantization work or applies weights in a slower code path than the base model alone.

How to fix (recommended)

- Use this workflow's RC LTX 2.3 (LTX2Pipeline) path and pass your adapter through `lora_path` / `lora_scale`. In this setup, the LoRA is merged once during pipeline load (AI Toolkit-style), so the per-step sampling cost stays close to the base model.

- When you're chasing preview-matching behavior, avoid stacking multiple LoRA loaders or mixing loader paths. Keep it to one `lora_path` + one `lora_scale` until the baseline matches.
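
The reason a one-time merge keeps per-step cost close to the base model can be shown with generic LoRA arithmetic: folding the scaled low-rank update into the weight gives the same output as applying the adapter on every call, but with a single matrix multiply. The toy example below uses tiny hand-written matrices and hypothetical function names. It illustrates the general idea and is not the RC node's actual implementation.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(weight, lora_down, lora_up, scale):
    """Return weight + scale * (lora_up @ lora_down), computed once."""
    delta = matmul(lora_up, lora_down)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(weight, delta)]


def apply_unmerged(weight, lora_down, lora_up, scale, x):
    """Compute W x + scale * up (down x): extra multiplies on every call."""
    base = matmul(weight, x)
    extra = matmul(lora_up, matmul(lora_down, x))
    return [[b + scale * e for b, e in zip(b_row, e_row)]
            for b_row, e_row in zip(base, extra)]


weight = [[1.0, 2.0], [3.0, 4.0]]   # 2x2 base weight
lora_down = [[1.0, -1.0]]           # rank-1 down projection, shape 1x2
lora_up = [[0.5], [2.0]]            # rank-1 up projection, shape 2x1
x = [[2.0], [1.0]]                  # column-vector input
scale = 0.5                         # plays the role of lora_scale

merged = merge_lora(weight, lora_down, lora_up, scale)
print(matmul(merged, x))                                   # merged path
print(apply_unmerged(weight, lora_down, lora_up, scale, x))  # per-call path
```

Both prints show the same result. After the merge, each forward pass costs one multiply by `merged`, with no adapter-specific work left in the sampling loop.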

(4) OOM errors on large resolutions or long videos#

Why this happens

LTX 2.3 is a 22B-parameter model and video generation is VRAM-intensive. High resolutions or many frames can exceed GPU memory, especially with LoRA overhead.

How to fix (recommended)

- Use a 2X Large (80 GB VRAM) or larger machine. This workflow is not compatible with Medium, Large, or X Large machines.

- Reduce resolution or frame count if you need to iterate quickly, then scale up for final renders.

- Enable VAE tiling if available — it can save ~3 GB VRAM with minimal quality loss.
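
A rough back-of-the-envelope calculation shows why the parameter count alone pushes this workflow toward a large GPU. The sketch below covers weight storage only and assumes a given number of bytes per parameter. The precision the pipeline actually loads is not stated here, and activations, the text encoder, and the VAE add to the total.

```python
def weight_memory_gb(num_params, bytes_per_param):
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


LTX_PARAMS = 22e9  # the 22B parameter count quoted above

# Bytes per parameter are assumptions about storage precision.
for label, width in (("16-bit (bf16/fp16)", 2), ("8-bit", 1)):
    print(f"{label}: ~{weight_memory_gb(LTX_PARAMS, width):.0f} GB for weights")
```

At 16 bits per parameter the weights alone take about 44 GB, which leaves limited headroom for video latents and activations on anything smaller than an 80 GB card.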

Run LTX 2.3 LoRA ComfyUI Inference now#

Open the workflow, set lora_path, and click Queue/Run to get LTX 2.3 LoRA results that stay close to your AI Toolkit training previews.