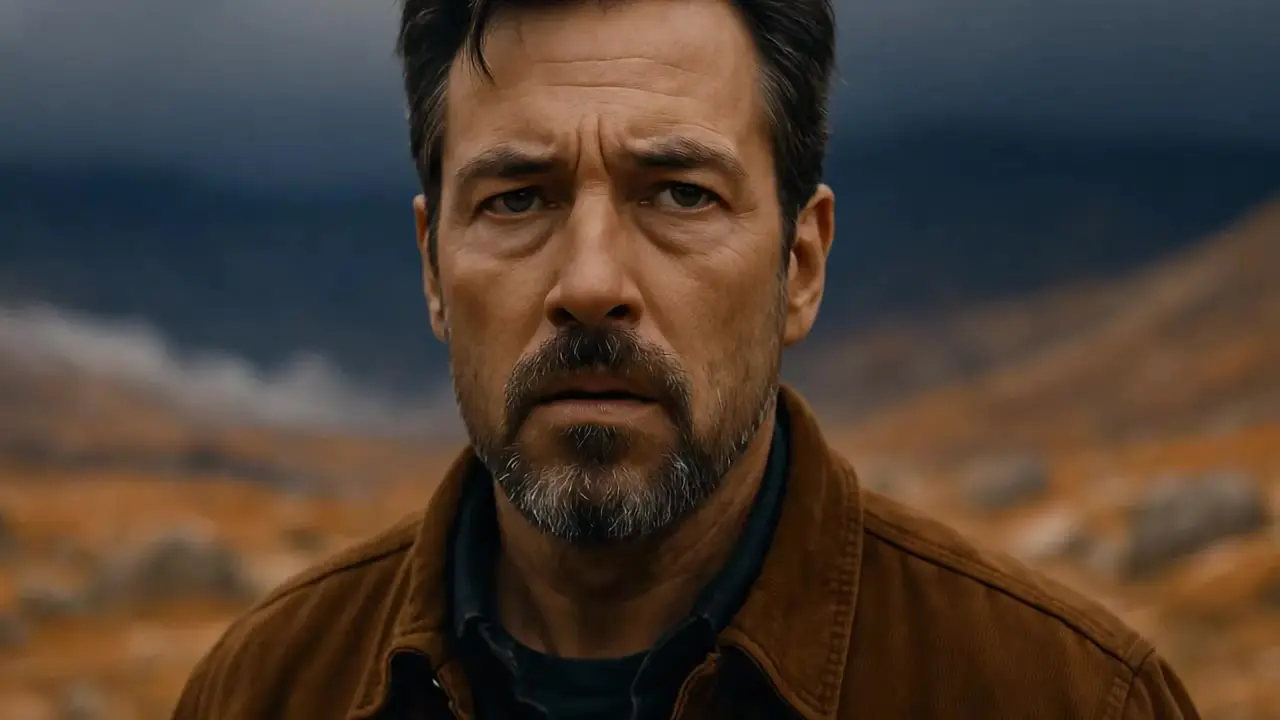

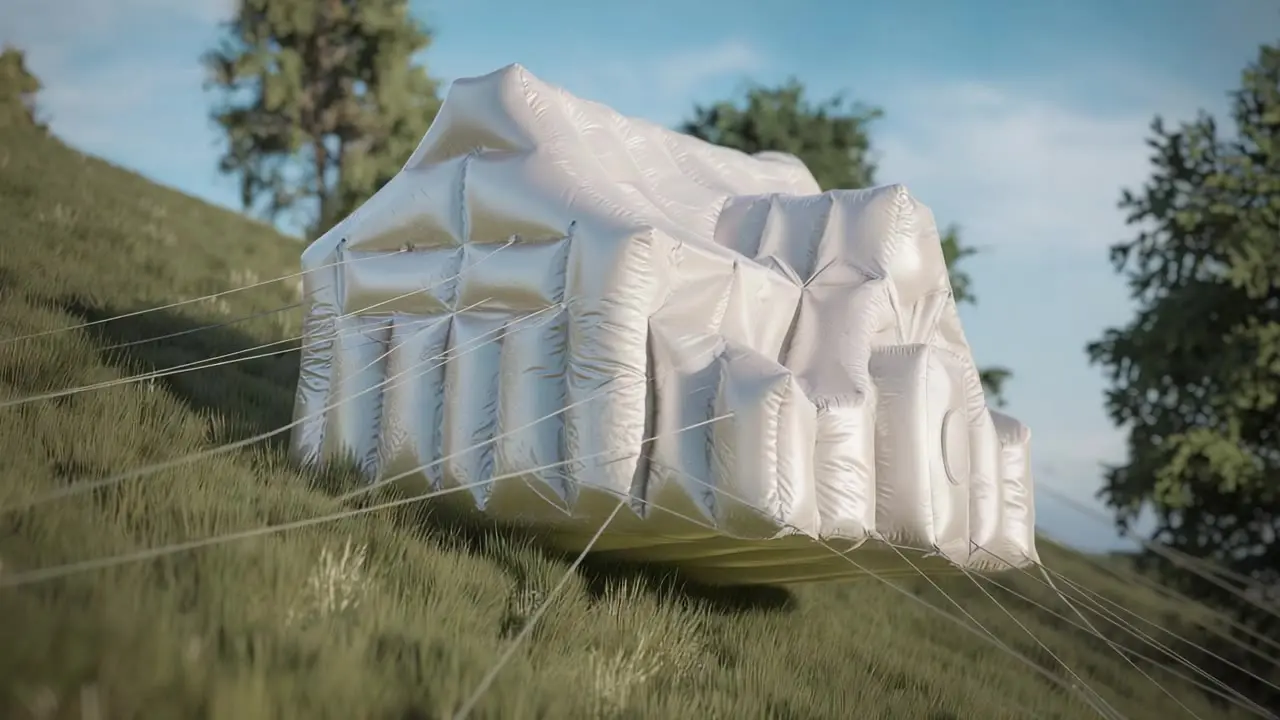

Runway Gen-4 is the latest iteration of Runway's AI video generation model. Compared to earlier versions like Gen-2 and Gen-3 Alpha, Runway Gen-4 is better at maintaining visual consistency within a single clip, for example by keeping a character or object stable as the camera angle changes or the scene progresses.

The model also includes updates to motion rendering and overall scene coherence. While motion in Runway Gen-4 can appear more fluid than in previous versions, outcomes still vary depending on the complexity of the input and prompt, and occasional inconsistencies remain.

Runway Gen-4 requires two inputs: an image (which serves as the base visual and first frame) and a text prompt describing the desired action or motion. The model does not currently support generation from text alone, which limits flexibility compared to some text-to-video tools.

Text prompts can be up to 1,000 characters long. In practice, prompts that focus on motion or camera direction tend to work best, since the image already defines the visual appearance. Both photos and AI-generated images can be used, though the quality of the output is still highly dependent on the clarity of the prompt and the content of the image.
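The input rules above (one image, one prompt of at most 1,000 characters) can be checked before a generation is submitted. The following is a minimal sketch only: `Gen4Request` and `validate_request` are hypothetical names invented for illustration and are not part of any Runway or RunComfy API.

```python
from dataclasses import dataclass

MAX_PROMPT_CHARS = 1000  # prompt limit stated in the text above


@dataclass
class Gen4Request:
    """Hypothetical container for the two required inputs (not a real API type)."""
    image_path: str  # base visual, used as the first frame
    prompt: str      # description of the desired action or camera motion


def validate_request(req: Gen4Request) -> list[str]:
    """Return a list of problems; an empty list means the inputs look usable."""
    problems = []
    if not req.image_path.strip():
        problems.append("an input image is required; text-only generation is not supported")
    if not req.prompt.strip():
        problems.append("a text prompt describing the motion is required")
    elif len(req.prompt) > MAX_PROMPT_CHARS:
        problems.append(
            f"prompt is {len(req.prompt)} characters; the limit is {MAX_PROMPT_CHARS}"
        )
    return problems


if __name__ == "__main__":
    request = Gen4Request("portrait.png", "Slow dolly-in as the subject turns toward the camera")
    print(validate_request(request))
```

Because the image already fixes the look of the scene, the example prompt describes only motion and camera direction.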

Runway Gen-4 supports video outputs of 5 or 10 seconds at 24 frames per second. The standard resolution is 1280×720 pixels (720p) in a 16:9 aspect ratio, with other formats like vertical (9:16) and square (1:1) also available.
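As a quick piece of arithmetic, the figures above determine how many frames each clip contains. The sketch below uses only the stated values (5 or 10 seconds, 24 frames per second, 1280×720); the function name is illustrative.

```python
FPS = 24                       # frame rate stated above
ALLOWED_DURATIONS_S = (5, 10)  # supported clip lengths in seconds
WIDTH, HEIGHT = 1280, 720      # standard 16:9 resolution


def frame_count(duration_s: int) -> int:
    """Number of frames in a clip of the given supported duration."""
    if duration_s not in ALLOWED_DURATIONS_S:
        raise ValueError(f"duration must be one of {ALLOWED_DURATIONS_S}")
    return duration_s * FPS


if __name__ == "__main__":
    for seconds in ALLOWED_DURATIONS_S:
        print(f"{seconds} s clip: {frame_count(seconds)} frames at {WIDTH}x{HEIGHT}")
```

A 5-second clip therefore holds 120 frames and a 10-second clip holds 240.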

While Gen-4 delivers higher quality than its predecessors, the limited duration and resolution can be restrictive for more complex storytelling or commercial use. Creating longer sequences still requires stitching together multiple short clips.
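To make the stitching step concrete, here is a rough sketch of how a longer sequence might be planned as a series of 5- and 10-second clips, plus a helper that writes the plain-text file list read by ffmpeg's concat demuxer. The function names and file names are assumptions for illustration, and ffmpeg is simply one common stitching tool, not something the model itself provides.

```python
def plan_clips(target_s: float) -> list[int]:
    """Split a target duration into 5 s and 10 s clips that cover at least target_s."""
    if target_s <= 0:
        raise ValueError("target duration must be positive")
    full_clips, remainder = divmod(target_s, 10)
    plan = [10] * int(full_clips)
    if remainder > 5:
        plan.append(10)  # leftover needs a full 10 s clip
    elif remainder > 0:
        plan.append(5)   # leftover fits in a 5 s clip
    return plan


def concat_list(paths: list[str]) -> str:
    """Build the text of an ffmpeg concat-demuxer list (one "file '...'" line per clip).

    Paths containing single quotes would need escaping; this sketch assumes none do.
    """
    return "".join(f"file '{path}'\n" for path in paths)


if __name__ == "__main__":
    plan = plan_clips(23)  # a 23-second sequence
    print(plan)
    names = [f"clip_{i:02d}.mp4" for i in range(len(plan))]  # hypothetical file names
    print(concat_list(names))
```

With the list saved as `clips.txt`, a command such as `ffmpeg -f concat -safe 0 -i clips.txt -c copy out.mp4` joins the clips without re-encoding, provided they all share the same codec, resolution, and frame rate.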

Runway Gen-4 is particularly suited for concept development, visual experimentation, and prototyping. It has potential applications in storyboarding, music video production, visual art projects, and other creative workflows where short, stylized clips are sufficient.

Runway Gen-4 improves on earlier models such as Gen-2 and Gen-3 Alpha, particularly in visual consistency within individual clips and in smoother motion. It also follows user prompts more closely, producing results that better reflect the described actions or camera movements.

Despite these advancements, Runway Gen-4 has several limitations to be aware of:

Clip Duration: Each video is limited to 5 or 10 seconds. Producing longer content requires stitching clips together, which takes additional time and planning.

Image Input Requirement: You must provide an input image to start generation. Gen-4 does not support text-only video generation, which adds an extra step and can limit spontaneity.

Cross-Scene Consistency: While Gen-4 performs reasonably well within single clips, keeping a character or visual style consistent across multiple clips remains challenging.

Visual Artifacts: As with many generative models, unexpected visual glitches or misinterpretations can occur. Objects may be distorted, or prompts may be only partially followed. Complex scenes can require several attempts to achieve a usable result.

Currently, Runway Gen-4 can often preserve a character’s appearance within a single short clip. However, it lacks a reliable solution for maintaining that consistency across multiple video clips. To improve continuity, creators may need to prepare consistent reference images for each scene, but even then, achieving a stable look across several clips remains challenging. Until dedicated features are introduced, maintaining narrative consistency will likely require manual workarounds and post-production editing.
