ComfyUI-INT8-Fast Introduction

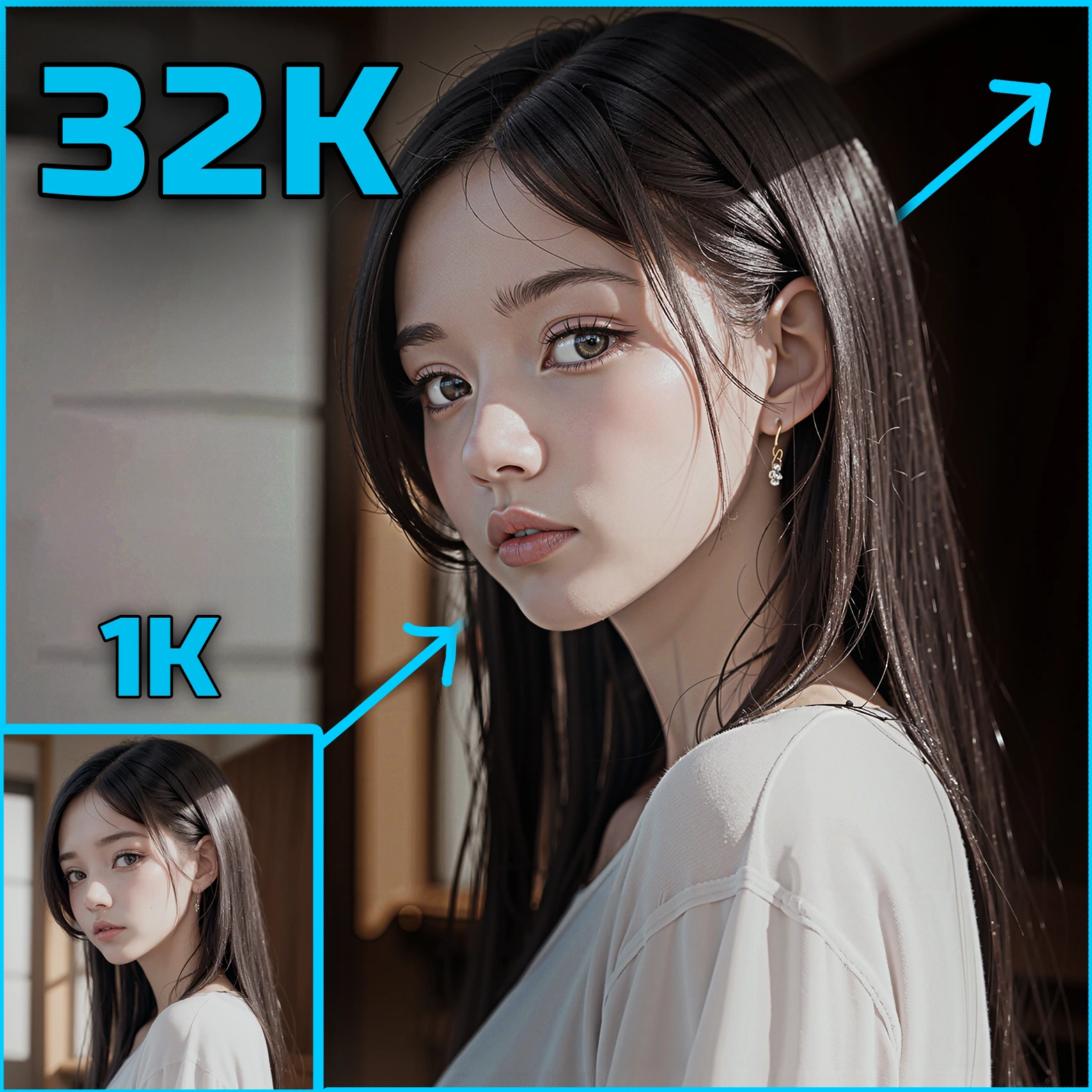

ComfyUI-INT8-Fast is an extension that accelerates AI model inference within the ComfyUI framework by using INT8 quantization. On compatible NVIDIA GPUs, such as the RTX 3090, it can make processing roughly 1.5 to 2 times faster. The extension is particularly useful for AI artists who work with large models and want shorter processing times without giving up too much quality. By converting models to INT8 precision, ComfyUI-INT8-Fast reduces the computational load, which enables quicker iterations and more efficient workflows.

How ComfyUI-INT8-Fast Works

At its core, ComfyUI-INT8-Fast relies on quantization, which reduces the precision of the numbers used in model computations from floating-point (FP) to integer (INT) formats. Specifically, it uses INT8 quantization: model weights and activations are represented as 8-bit integers instead of higher-precision floating-point numbers (32-bit, or the 16-bit formats commonly used for inference). Each value takes fewer bits, so less data has to be moved and processed, which leads to faster computation. Think of it like using a shorthand version of a language to communicate more quickly while still conveying the essential meaning. The approach is most effective on GPUs with hardware support for INT8 operations, where the speed improvement can be significant.
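To make the idea concrete, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. It is an illustration of the general technique, not the extension's actual code: the function names (`quantize_int8`, `int8_dot`) and the sample values are hypothetical, and a real implementation would run on the GPU with per-channel or per-block scales.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    # One scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def int8_dot(weights, activations):
    """Dot product computed on integers, then rescaled back to a float."""
    q_weights, weight_scale = quantize_int8(weights)
    q_activations, activation_scale = quantize_int8(activations)
    # The multiply-accumulate runs entirely on small integers.
    accumulator = sum(w * a for w, a in zip(q_weights, q_activations))
    # A single float multiplication restores the original magnitude.
    return accumulator * weight_scale * activation_scale


weights = [0.42, -1.27, 0.05, 0.88]      # illustrative values only
activations = [1.5, -0.3, 2.0, 0.7]

exact = sum(w * a for w, a in zip(weights, activations))
approx = int8_dot(weights, activations)
print(f"float result: {exact:.4f}  int8 result: {approx:.4f}")
```

The integer result is close to the floating-point one but not identical. That small rounding error is the quality cost that quantization trades for speed.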

ComfyUI-INT8-Fast Features

ComfyUI-INT8-Fast offers several features that enhance its usability and performance:

- INT8 LoRA Node: This feature allows for faster inference by applying LoRA (Low-Rank Adaptation) using INT8 precision. While this may slightly reduce the quality of the output, it significantly boosts speed, making it ideal for quick iterations.

- Dynamic LoRA Node: Offers a balance between speed and quality by performing dynamic calculations. This option is slightly slower than the INT8 LoRA Node but can provide better quality results.

- Pre-quantized Checkpoints: The extension supports pre-quantized model checkpoints, which are optimized for INT8 operations. These checkpoints are recommended for most architectures to achieve the best performance.
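The difference between the two LoRA nodes can be pictured with a small sketch. The page does not describe how either node is implemented, so the following is only one plausible reading, stated as an assumption: a "static" path merges the LoRA delta into the weights and quantizes the result once, while a "dynamic" path keeps the quantized base weights and adds the LoRA contribution in floating point at run time. All names and values here are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    return [max(-127, min(127, round(v / scale))) for v in values], scale


def int8_dot(row, x):
    """Quantize both vectors, accumulate on integers, rescale to float."""
    q_row, row_scale = quantize_int8(row)
    q_x, x_scale = quantize_int8(x)
    return sum(r * v for r, v in zip(q_row, q_x)) * row_scale * x_scale


# One weight row plus a rank-1 LoRA update (delta = lora_b * lora_a).
base_row = [0.42, -1.27, 0.05, 0.88]
lora_a = [0.1, 0.2, -0.1, 0.05]
lora_b = 0.5
x = [1.5, -0.3, 2.0, 0.7]

merged_row = [w + lora_b * a for w, a in zip(base_row, lora_a)]
exact = sum(w * v for w, v in zip(merged_row, x))

# Assumed "static" path: merge first, then quantize the merged weights once.
static_out = int8_dot(merged_row, x)

# Assumed "dynamic" path: INT8 base weights, LoRA term computed in float per call.
lora_term = lora_b * sum(a * v for a, v in zip(lora_a, x))
dynamic_out = int8_dot(base_row, x) + lora_term

print(f"exact={exact:.4f} static={static_out:.4f} dynamic={dynamic_out:.4f}")
```

Under this reading, the dynamic path does extra floating-point work on every call, which would fit the description of it as slightly slower, while the LoRA update itself is never rounded to 8 bits.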

ComfyUI-INT8-Fast Models

The extension supports a variety of models, each tailored for specific tasks and performance needs:

- FLUX.2-klein-base-9b and 4b: These models are optimized for INT8 operations and are suitable for tasks requiring high-speed processing.

- Chroma1-HD and Z-Image Models: These models are designed for high-definition image processing and are available in INT8 formats to enhance speed without significantly compromising quality.

- Anima Model: This model is tailored for creative applications, offering a balance between speed and artistic output.

Each model can be downloaded from the provided links, ensuring easy access and integration into your workflow.
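As a rough illustration of what INT8 storage means for models of this size, the sketch below estimates weight memory from the parameter count. It assumes the "9b" and "4b" in the model names stand for about 9 and 4 billion parameters, and it ignores quantization scales, any layers left in higher precision, and activation memory, so real file sizes will differ.

```python
# Bytes needed to store a single parameter in each format.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params, dtype):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# Parameter counts inferred from the model names (an assumption).
for label, num_params in [("9b", 9e9), ("4b", 4e9)]:
    sizes = ", ".join(
        f"{dtype}: {weight_memory_gb(num_params, dtype):.0f} GB"
        for dtype in ("fp32", "fp16", "int8")
    )
    print(f"{label} -> {sizes}")
```

INT8 weights take half the space of 16-bit weights and a quarter of 32-bit weights, which is part of why less data has to move through the GPU during inference.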

Troubleshooting ComfyUI-INT8-Fast

If you encounter issues while using ComfyUI-INT8-Fast, here are some common problems and solutions:

- GPU Compatibility: Ensure that your GPU supports INT8 operations. The extension is optimized for NVIDIA GPUs with sufficient INT8 TOPS (Tera Operations Per Second).

- Quality vs. Speed: If you notice a drop in quality, consider using the Dynamic LoRA Node for a better balance between speed and output quality.

- Installation Issues: Make sure that all dependencies, such as Triton and the latest version of ComfyKitchen, are correctly installed. This is crucial for the extension to function properly.
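For the installation check, a quick script can confirm whether the dependencies are importable from the Python environment that ComfyUI uses. This is a minimal sketch using only the standard library. The module name `triton` is the usual import name for Triton, but `comfy_kitchen` is an assumed import name for ComfyKitchen, and `missing_modules` is a hypothetical helper; adjust both to match your installation.

```python
import importlib.util


def missing_modules(module_names):
    """Return the names that cannot be found in the current Python environment."""
    return [name for name in module_names if importlib.util.find_spec(name) is None]


# "comfy_kitchen" is an assumed import name; check the package's own docs.
required = ["triton", "comfy_kitchen"]

missing = missing_modules(required)
if missing:
    print("Missing dependencies:", ", ".join(missing))
else:
    print("All listed dependencies are importable.")
```

Run it with the same Python interpreter that launches ComfyUI, since a package installed in a different environment will still show up as missing.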

Learn More about ComfyUI-INT8-Fast

To further explore the capabilities of ComfyUI-INT8-Fast, you can access additional resources such as tutorials and community forums. These platforms provide valuable insights and support, helping you make the most of the extension. Engaging with the community can also offer practical tips and solutions to enhance your creative projects.