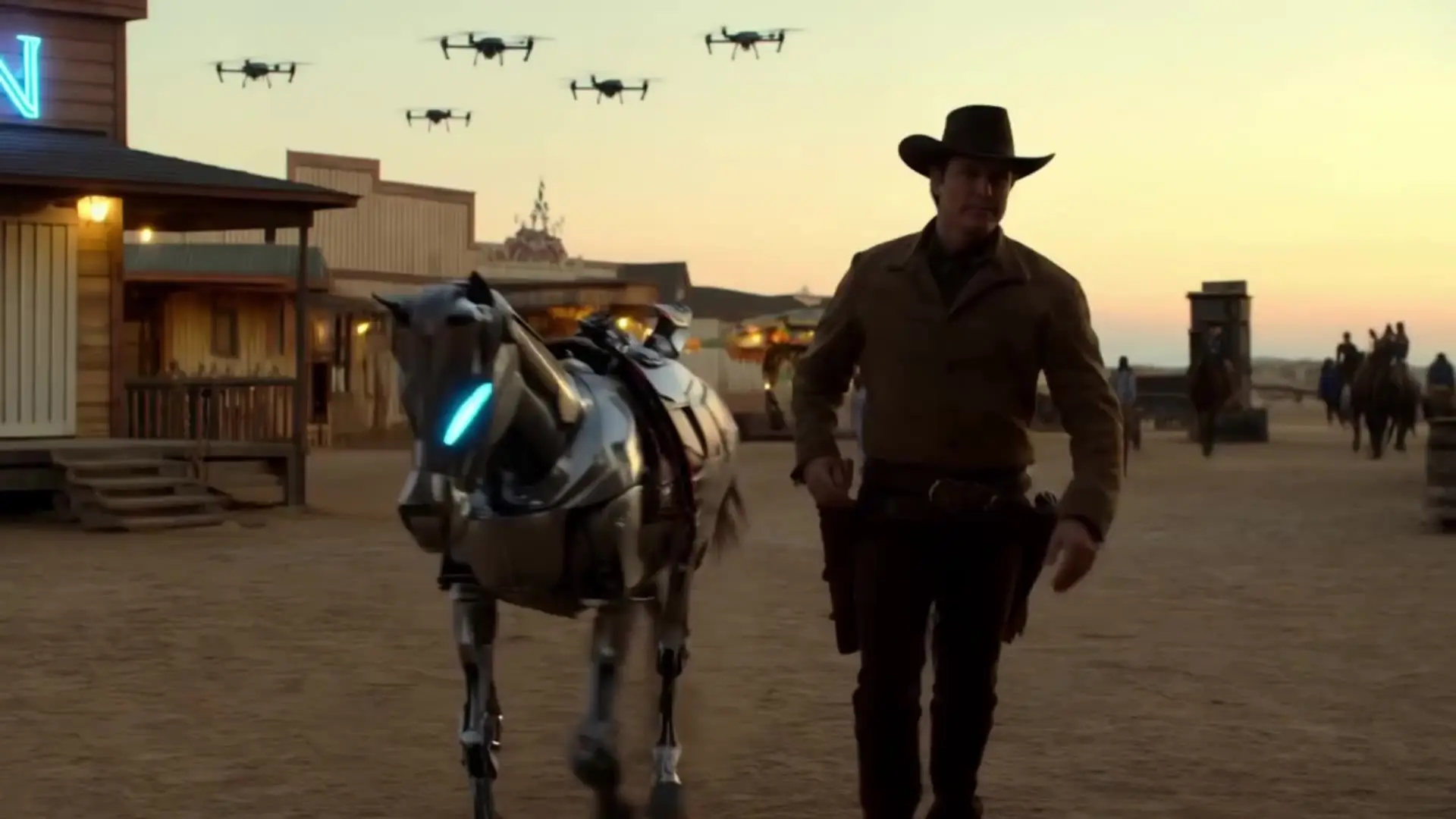

Create rapid high-quality video drafts with precise style and speed

Seedance 2.0 Fast is a speed-oriented multimodal text-to-video model from ByteDance Seed that turns scene descriptions and optional references into short cinematic clips. On RunComfy, you drive generation with a prompt plus optional images (up to 9), videos (up to 3), and audio clips (up to 3) for multimodal reference mode. You can also set aspect_ratio, duration, resolution, generate_audio, and seed, and pass optional tools (e.g. [{ "type": "web_search" }]) to let the model search online when it chooses to.

Add a tool with type: web_search so the model can search the web when needed; check usage.tool_usage.web_search on the task query response to see how many searches ran.
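As a rough illustration of the tool setting above, the sketch below attaches the tools list to a request payload and reads the search count back from a task response. The helper names (`with_web_search`, `web_search_count`) are hypothetical, and the nested-dictionary shape of the response is an assumption inferred from the dotted path usage.tool_usage.web_search; check the actual response before relying on it.

```python
from typing import Any

# Tool list exactly as written in the description above.
TOOLS = [{"type": "web_search"}]


def with_web_search(payload: dict[str, Any]) -> dict[str, Any]:
    """Return a copy of the payload with the web_search tool attached."""
    return {**payload, "tools": list(TOOLS)}


def web_search_count(task_response: dict[str, Any]) -> int:
    """Read usage.tool_usage.web_search, or 0 if any level is missing.

    Assumption: the dotted path maps onto nested JSON objects.
    """
    node: Any = task_response
    for key in ("usage", "tool_usage", "web_search"):
        if not isinstance(node, dict) or key not in node:
            return 0
        node = node[key]
    return node if isinstance(node, int) else 0


if __name__ == "__main__":
    payload = with_web_search({"prompt": "A storm rolling over a harbor at dusk"})
    print(payload["tools"])
    # Made-up response used only to exercise the helper.
    print(web_search_count({"usage": {"tool_usage": {"web_search": 2}}}))
```

Returning 0 for a missing field keeps the helper usable on jobs that never enabled the tool.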

Seedance 2.0 Fast is ByteDance Seed’s speed-oriented multimodal video model: you describe the scene in text and can add images, reference video, and reference audio so the model aligns motion, look, and sound. On RunComfy it is tuned for faster turnaround while still supporting cinematic short clips, optional native audio, lip-sync-friendly results, and camera control when you steer the shot in your prompt.

Resolution is 480p or 720p (default). Aspect ratio defaults to adaptive (the model picks the closest match; the task result shows the actual output ratio). You can also fix it to 16:9, 9:16, 4:3, 3:4, 1:1, or 21:9.
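A client can check these two settings before submitting a job. The following is a minimal sketch, assuming the playground accepts the literal strings listed above (including "adaptive" as the aspect ratio value); `resolve_output_settings` is a hypothetical helper, not part of any RunComfy SDK.

```python
from typing import Optional

# Values taken from the text above; the exact string spellings the API
# accepts are an assumption.
RESOLUTIONS = ("480p", "720p")
DEFAULT_RESOLUTION = "720p"
ASPECT_RATIOS = ("adaptive", "16:9", "9:16", "4:3", "3:4", "1:1", "21:9")
DEFAULT_ASPECT_RATIO = "adaptive"


def resolve_output_settings(
    resolution: Optional[str] = None,
    aspect_ratio: Optional[str] = None,
) -> dict[str, str]:
    """Fill in defaults and reject values outside the documented sets."""
    resolution = resolution or DEFAULT_RESOLUTION
    aspect_ratio = aspect_ratio or DEFAULT_ASPECT_RATIO
    if resolution not in RESOLUTIONS:
        raise ValueError(f"resolution must be one of {RESOLUTIONS}, got {resolution!r}")
    if aspect_ratio not in ASPECT_RATIOS:
        raise ValueError(f"aspect_ratio must be one of {ASPECT_RATIOS}, got {aspect_ratio!r}")
    return {"resolution": resolution, "aspect_ratio": aspect_ratio}


if __name__ == "__main__":
    print(resolve_output_settings())
    print(resolve_output_settings("480p", "9:16"))
```

With "adaptive", the final ratio is only known after the job finishes, so downstream layout code should read it from the task result rather than assume one.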

Only the prompt is required; references are optional, and adding any of them switches the job to multimodal reference mode. Prompt: up to roughly 500 Chinese characters or 1,000 English words is recommended. Images: up to 9 (jpeg, png, webp, bmp, tiff, gif). Reference videos: up to 3 (mp4, mov), each 2–15 seconds. Reference audio: up to 3 (wav, mp3), each 2–15 seconds and under 15 MB. Clear prompts plus aligned references usually give the steadiest identity, style, and sync.
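The reference limits above can be checked locally before upload. This is a sketch under stated assumptions: `MediaRef` and `check_references` are hypothetical names, durations and sizes are supplied by the caller (nothing is probed from the files), ".jpg" is treated as equivalent to "jpeg", and 15 MB is taken to mean 15 × 1024 × 1024 bytes.

```python
from collections import Counter
from dataclasses import dataclass
from pathlib import PurePosixPath
from typing import Optional

LIMITS = {
    # ".jpg" is an assumption; the source lists "jpeg" only.
    "image": {"max_count": 9, "exts": {".jpeg", ".jpg", ".png", ".webp", ".bmp", ".tiff", ".gif"}},
    "video": {"max_count": 3, "exts": {".mp4", ".mov"}},
    "audio": {"max_count": 3, "exts": {".wav", ".mp3"}},
}
MIN_CLIP_S, MAX_CLIP_S = 2.0, 15.0
MAX_AUDIO_BYTES = 15 * 1024 * 1024  # assumption: binary megabytes


@dataclass
class MediaRef:
    kind: str                          # "image", "video", or "audio"
    filename: str
    duration_s: Optional[float] = None  # caller-supplied, if known
    size_bytes: Optional[int] = None    # caller-supplied, if known


def check_references(refs: list[MediaRef]) -> list[str]:
    """Return human-readable problems; an empty list means all checks passed."""
    problems: list[str] = []
    for kind, count in Counter(ref.kind for ref in refs).items():
        if kind not in LIMITS:
            problems.append(f"unknown reference kind: {kind}")
        elif count > LIMITS[kind]["max_count"]:
            problems.append(f"too many {kind} references: {count} > {LIMITS[kind]['max_count']}")
    for ref in refs:
        if ref.kind not in LIMITS:
            continue
        ext = PurePosixPath(ref.filename).suffix.lower()
        if ext not in LIMITS[ref.kind]["exts"]:
            problems.append(f"{ref.filename}: unsupported {ref.kind} format {ext or '(none)'}")
        if ref.kind in ("video", "audio") and ref.duration_s is not None:
            if not MIN_CLIP_S <= ref.duration_s <= MAX_CLIP_S:
                problems.append(f"{ref.filename}: duration {ref.duration_s}s is outside 2-15 s")
        if ref.kind == "audio" and ref.size_bytes is not None:
            if ref.size_bytes >= MAX_AUDIO_BYTES:
                problems.append(f"{ref.filename}: audio must be under 15 MB")
    return problems


if __name__ == "__main__":
    refs = [
        MediaRef("image", "hero.png"),
        MediaRef("video", "walk_cycle.mp4", duration_s=6.0),
        MediaRef("audio", "voice.wav", duration_s=20.0, size_bytes=2_000_000),
    ]
    for problem in check_references(refs):
        print(problem)
```

Collecting every problem rather than failing on the first lets a UI show all issues with a reference pack at once.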

Duration is an integer 4–15 seconds (default 5). Choose any whole-second value in that range per job.

With Generate audio (generate_audio) set to true (the default), the model can output video with synchronized audio (dialogue, SFX, or music). Set it to false for silent video. Lip-sync quality depends on how explicitly you describe speech, framing, and timing in the prompt; reference audio can also guide rhythm or tone when you use multimodal references.

Choose Fast when you care most about shorter wait times and rapid iteration. It takes the same playground inputs (prompt, image_url, video_url, audio_url, aspect_ratio, duration, resolution, generate_audio, seed), with 480p/720p output here and the full multimodal reference limits. Choose Pro when you want the highest cinematic fidelity the provider offers for a shot. A/B test both on the same prompt and references to judge quality vs. speed for your pipeline.

Seedance 2.0 Fast focuses on short cinematic clips with rich multimodal references (many images plus optional video and audio), 4–15 s duration, adaptive or fixed aspect ratios, and built-in audio when generate_audio is on. Gains are use-case dependent; compare on your own prompts, characters, and reference packs rather than relying on a single benchmark.

Which model fits best depends on budget, latency, and the kind of motion you need. On RunComfy, Seedance 2.0 Fast emphasizes fast multimodal text-to-video with up to nine images, three reference videos, three audio references, 480p/720p presets, and a generate_audio toggle. Wan 2.5 and Kling 2.6 differ in pricing, limits, and strengths, so run parallel tests on your typical briefs and reference sets.

Mirror the playground Input schema: prompt, image_url, video_url, audio_url, aspect_ratio, duration, resolution, generate_audio, and seed. Enforce the same prompt and media limits in your app, then authenticate with your API key and make sure your account has credits for batch or automated jobs.
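One way to mirror the schema in application code is a small payload builder that applies the documented defaults and limits before anything is sent. The sketch below is illustrative only: `build_payload` and `prompt_warnings` are hypothetical helpers, the media fields (image_url, video_url, audio_url) are left out because the source does not say whether they take a single URL or a list, and no endpoint or authentication call is shown.

```python
import re
from typing import Any, Optional

MIN_DURATION_S, MAX_DURATION_S, DEFAULT_DURATION_S = 4, 15, 5


def prompt_warnings(prompt: str) -> list[str]:
    """Flag prompts that exceed the recommended (not enforced) lengths."""
    warnings: list[str] = []
    # Rough counts: CJK Unified Ideographs for Chinese, word-like runs for English.
    cjk_chars = len(re.findall(r"[\u4e00-\u9fff]", prompt))
    words = len(re.findall(r"[A-Za-z0-9']+", prompt))
    if cjk_chars > 500:
        warnings.append(f"{cjk_chars} Chinese characters; about 500 or fewer is recommended")
    if words > 1000:
        warnings.append(f"{words} English words; about 1000 or fewer is recommended")
    return warnings


def build_payload(
    prompt: str,
    *,
    duration: int = DEFAULT_DURATION_S,
    resolution: str = "720p",
    aspect_ratio: str = "adaptive",
    generate_audio: bool = True,
    seed: Optional[int] = None,
) -> dict[str, Any]:
    """Assemble the non-media input fields with the documented defaults."""
    if not prompt.strip():
        raise ValueError("prompt is required")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(duration, bool) or not isinstance(duration, int):
        raise ValueError("duration must be a whole number of seconds")
    if not MIN_DURATION_S <= duration <= MAX_DURATION_S:
        raise ValueError(f"duration must be between {MIN_DURATION_S} and {MAX_DURATION_S} seconds")
    payload: dict[str, Any] = {
        "prompt": prompt,
        "aspect_ratio": aspect_ratio,  # passed through unchecked in this sketch
        "duration": duration,
        "resolution": resolution,      # passed through unchecked in this sketch
        "generate_audio": bool(generate_audio),
    }
    if seed is not None:
        payload["seed"] = int(seed)
    return payload


if __name__ == "__main__":
    text = "A fox runs through fresh snow, handheld tracking shot, soft morning light"
    print(prompt_warnings(text))
    print(build_payload(text, duration=8, seed=42))
```

Keeping the length check as a warning rather than an error matches the source, which describes the prompt limits as recommended rather than required.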

Commercial use depends on ByteDance’s licensing for the model and RunComfy’s terms of service. Read the official model license and RunComfy docs, or email hi@runcomfy.com before using generated footage in paid campaigns, client work, or wide distribution.

Creators and teams who need quick multimodal video generation: social and ad concepts, storyboards and previs, branded shorts, and iteration-heavy workflows where speed matters as much as a single “hero” frame. Pair text with references when you need a repeatable look, character, or audio-aware motion, then upgrade to Pro for final polish when the shot warrants it.
