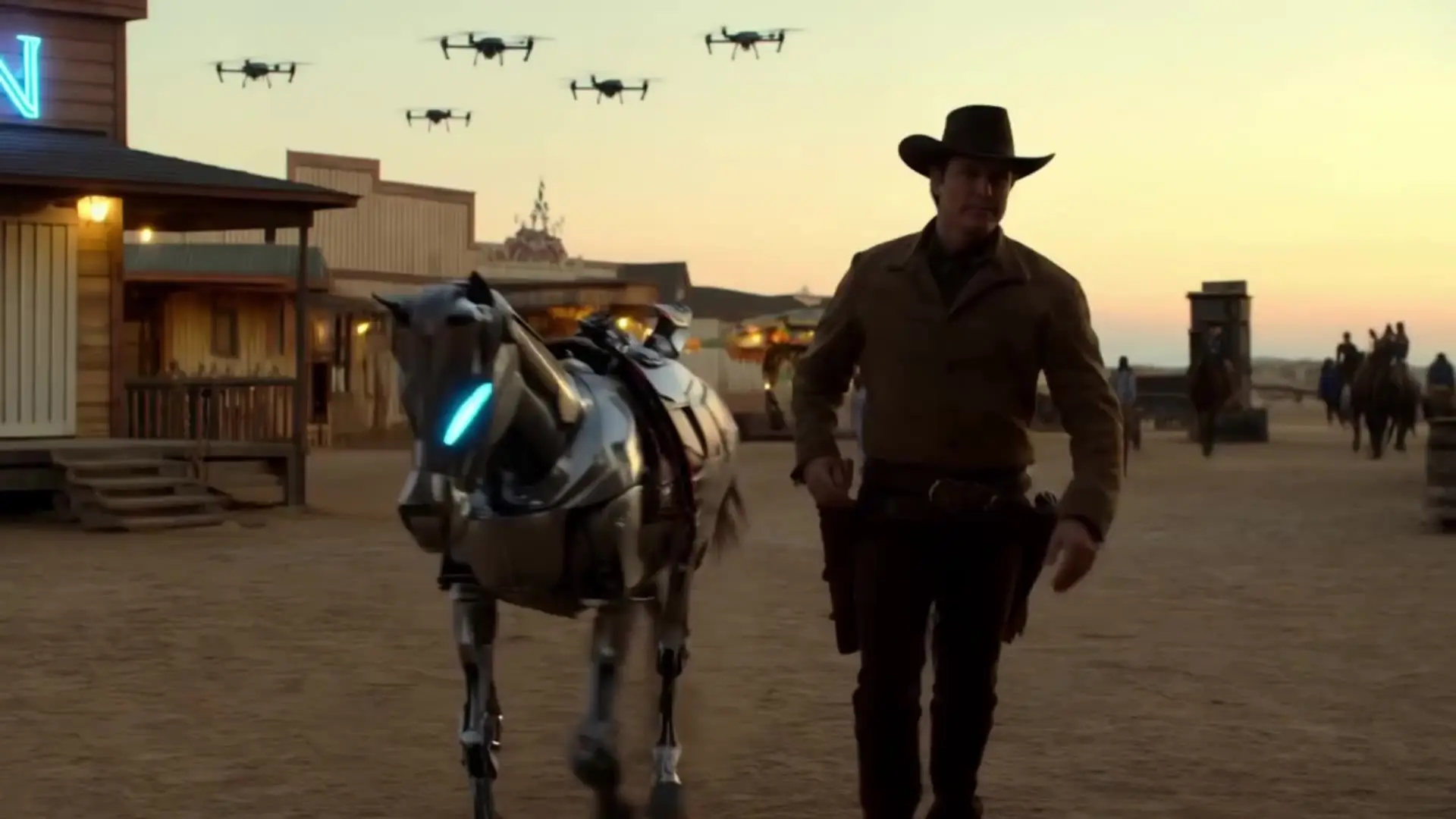

Generate cinematic clips faster with multimodal references, lip-sync, and camera control

This template on RunComfy uses Alibaba's async video-synthesis API with the happyhorse-1.0-r2v model. You upload 1 to 9 reference images and refer to each one in the prompt as character1, character2, character3 … in upload order. The model then fuses those subjects into a single coherent video while preserving identity, color, materials, and composition.

Instead of choosing between text-to-video freedom and image-to-video fidelity, you can bring a cast (a character, an outfit, a prop, an accessory) into one prompt and direct it with natural language. Powered by a 15B-parameter unified Transformer with DMD-2 distillation, the model delivers 1080P output at competitive speed without sacrificing facial fidelity, garment detail, or scene continuity.

Output format: video / resolution tier: 720P or 1080P / duration: 3–15 seconds / aspect ratio: 16:9, 9:16, 1:1, 4:3, 3:4 / reference images: 1–9 per generation

| Parameter | Required | Type | Default | Range / Options | Description |
|---|---|---|---|---|---|
| image_url_1* | Yes | string | — | JPEG, JPG, PNG, WEBP | First reference image, tagged as character1 in the prompt. |
| image_url_2 … image_url_9 | No | string | — | JPEG, JPG, PNG, WEBP | Optional additional reference images, tagged as character2 … character9. |
| prompt* | Yes | string | — | max 2500 Chinese / 5000 non-Chinese chars | Scene, motion, camera, lighting; use character1/character2/… to reference each image. |
| aspect_ratio | No | string | 16:9 | 16:9, 9:16, 1:1, 4:3, 3:4 | Output aspect ratio. |
| resolution | No | string | 1080P | 720P, 1080P | Output video resolution tier. |
| duration | No | integer | 5 | 3–15 | Output video duration in seconds. |
| seed | No | integer | 0 | 0 to 2147483647 | Optional random seed. Use 0 to let the provider choose one automatically. |
| watermark | No | boolean | false | true, false | Whether to include the provider watermark on the generated video. |
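The parameter table can be turned into a small client-side check before a request is submitted. The sketch below is illustrative only: `build_payload` is a hypothetical helper, the flat dictionary layout is an assumption rather than the documented request format, and the prompt check uses only the looser 5000-character limit.

```python
from typing import Any

ASPECT_RATIOS = {"16:9", "9:16", "1:1", "4:3", "3:4"}
RESOLUTIONS = {"720P", "1080P"}
MAX_SEED = 2147483647
MAX_PROMPT_CHARS = 5000  # non-Chinese limit; the table caps Chinese text at 2500


def build_payload(
    image_urls: list[str],
    prompt: str,
    aspect_ratio: str = "16:9",
    resolution: str = "1080P",
    duration: int = 5,
    seed: int = 0,
    watermark: bool = False,
) -> dict[str, Any]:
    """Check inputs against the parameter table and return a flat dict.

    Hypothetical helper: the key names follow the table, but the real
    request envelope may differ.
    """
    if not 1 <= len(image_urls) <= 9:
        raise ValueError("provide between 1 and 9 reference images")
    if not prompt.strip():
        raise ValueError("prompt is required")
    if len(prompt) > MAX_PROMPT_CHARS:
        raise ValueError("prompt is longer than 5000 characters")
    if aspect_ratio not in ASPECT_RATIOS:
        raise ValueError(f"unsupported aspect_ratio: {aspect_ratio}")
    if resolution not in RESOLUTIONS:
        raise ValueError(f"unsupported resolution: {resolution}")
    if not 3 <= duration <= 15:
        raise ValueError("duration must be between 3 and 15 seconds")
    if not 0 <= seed <= MAX_SEED:
        raise ValueError("seed must be between 0 and 2147483647")

    # image_url_1 .. image_url_N, numbered in upload order.
    payload: dict[str, Any] = {
        f"image_url_{i}": url for i, url in enumerate(image_urls, start=1)
    }
    payload.update(
        prompt=prompt,
        aspect_ratio=aspect_ratio,
        resolution=resolution,
        duration=duration,
        seed=seed,
        watermark=watermark,
    )
    return payload
```

Calling `build_payload(["https://example.com/person.png"], "character1 walking through a sunlit corridor", duration=8)` returns a dictionary with the table defaults filled in for every option that was not set explicitly.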

HappyHorse 1.0 Reference to Video is the multi-image subject-to-video mode of HappyHorse 1.0 — the #1 Arena-ranked video model (Elo 1392). It accepts 1 to 9 reference images plus a text prompt that tags each subject as character1, character2, character3 …, then fuses them into a single coherent 720P/1080P clip with stable identity, outfit, and prop fidelity.

Text-to-video starts from words only; image-to-video animates one source frame; reference-to-video brings multiple subjects (a person, a costume, an accessory, a prop) into the same generation and lets you direct them with one prompt. It combines the freedom of text prompting with the identity-locking strength of reference images.

The reference order is fixed by upload position. Image 1 is character1, image 2 is character2, image 3 is character3, and so on up to character9. In your prompt you write something like “character1 wearing character2, holding character3, walking through a sunlit corridor” — the model binds each tag to the matching reference image.
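Because tags are bound purely by upload position, it is easy to reference a tag that has no image behind it. A minimal sketch of a pre-flight check, assuming tags always appear literally as `character` followed by a number (`character_tags` and `unknown_tags` are hypothetical helper names, not part of any API):

```python
import re


def character_tags(image_urls: list[str]) -> dict[str, str]:
    """Map character1, character2, ... to images by upload order."""
    if not 1 <= len(image_urls) <= 9:
        raise ValueError("provide between 1 and 9 reference images")
    return {f"character{i}": url for i, url in enumerate(image_urls, start=1)}


def unknown_tags(prompt: str, image_count: int) -> list[str]:
    """Return tags used in the prompt that have no matching reference image."""
    used = {int(n) for n in re.findall(r"\bcharacter(\d+)\b", prompt)}
    return [f"character{n}" for n in sorted(used) if not 1 <= n <= image_count]


prompt = (
    "character1 wearing character2, holding character3, "
    "walking through a sunlit corridor"
)
# With only two uploads, character3 has nothing to bind to.
missing = unknown_tags(prompt, image_count=2)
```

Running the check before submission catches a prompt that mentions more characters than there are uploaded references.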

The model outputs native 720P or 1080P clips with selectable durations from 3 to 15 seconds, across 16:9, 9:16, 1:1, 4:3, and 3:4 aspect ratios. Output quality is suitable for ad delivery and social publishing without re-grading.

Each reference image must be JPEG, JPG, PNG, or WEBP, with a short side of at least 400 pixels (720P or higher recommended) and a file size under 10MB, served from a public HTTP/HTTPS URL. Avoid blurry, heavily compressed, or watermarked sources — sharp, well-lit references give the model the best chance to lock identity.
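These requirements can be checked locally before uploading. The sketch below assumes you already know each image's dimensions and file size, treats "10MB" as 10 × 1024 × 1024 bytes, and guesses the format from the URL path extension, which is only a heuristic. `check_reference` is a hypothetical helper.

```python
from urllib.parse import urlparse

ALLOWED_EXTENSIONS = (".jpeg", ".jpg", ".png", ".webp")
MIN_SHORT_SIDE = 400           # pixels
MAX_BYTES = 10 * 1024 * 1024   # assumption: "10MB" read as 10 MiB


def check_reference(url: str, width: int, height: int, size_bytes: int) -> list[str]:
    """Return a list of problems; an empty list means the image looks acceptable."""
    problems: list[str] = []
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https"):
        problems.append("URL must use http or https")
    # Heuristic: infer the format from the path extension.
    if not parsed.path.lower().endswith(ALLOWED_EXTENSIONS):
        problems.append("format must be JPEG, JPG, PNG, or WEBP")
    if min(width, height) < MIN_SHORT_SIDE:
        problems.append("short side must be at least 400 pixels")
    if size_bytes >= MAX_BYTES:
        problems.append("file size must be under 10MB")
    return problems
```

The check cannot tell whether a URL is publicly reachable or whether the image is blurry or watermarked; those still need a manual look.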

Anchor each character tag in one sentence, then describe motion and camera language: drift, dolly in, orbit, tilt up, push, reveal. State what must stay locked (face, outfit, packaging), add lighting evolution for a cinematic feel, and keep each clip to one clear visual beat. Reuse the same seed when comparing prompt or reference variants.
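The seed advice can be sketched as a small helper that builds several prompt variants sharing one seed. This assumes a flat settings dictionary keyed by the parameter names from the table; `variants_with_fixed_seed` is a hypothetical name. It rejects seed 0 because that value lets the provider choose a seed automatically, which would defeat the comparison.

```python
from typing import Any


def variants_with_fixed_seed(
    base: dict[str, Any], prompts: list[str], seed: int
) -> list[dict[str, Any]]:
    """Copy the base settings once per prompt, pinning the same non-zero seed."""
    if not 1 <= seed <= 2147483647:
        raise ValueError("pick a seed between 1 and 2147483647 for comparisons")
    return [{**base, "prompt": p, "seed": seed} for p in prompts]


base = {
    "image_url_1": "https://example.com/person.png",
    "resolution": "720P",
    "duration": 5,
}
runs = variants_with_fixed_seed(
    base,
    [
        "character1 walks toward the camera, slow dolly in, face stays locked",
        "character1 walks toward the camera, orbit left, face stays locked",
    ],
    seed=1234,
)
```

Each entry in `runs` differs only in its camera wording, so differences between the resulting clips are easier to attribute to the prompt change.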

The model is ideal for multi-character storytelling, virtual try-on with prop swaps, character + outfit + accessory videos, brand asset assembly, packaging-to-presentation transitions, and cinematic ad teasers where you already have a cast of reference assets and need them moving together with stable identity.
